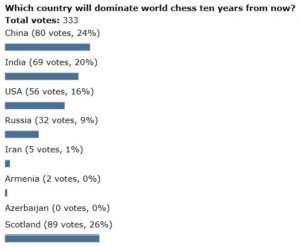

Last week’s question was: ‘Which country will dominate world chess ten years from now?’ And the answer is… Scotland?! Ignoring all those super-patriots and jokers, I will rule out a tartan charge to greatness, and view the true order as China, India, USA, Russia. Intriguing to see Russia so low in your rankings. Maybe the Russian conveyor belt of elite chess talent is slowing, if not quite grinding to a halt?

This week I am interested in FIDE ratings, inflation (or deflation?) and whether today is a golden era for elite chess. If you look at the Live Chess Ratings site, you will see four players rated over 2800, with Magnus Carlsen naturally first, on 2853. I believe Bobby Fischer peaked at 2785.

Even if you believe there has been inflation in the system (as I think most do) it’s still possible that today’s batch of top players represents a golden era of chess. Just one example: Pavel Eljanov’s recent brilliance at the Baku Olympiad and especially the Chess.com Isle of Man International has jumped him up to Number 16 in the world. Eljanov is a wonderful player, but the rating system says there are 15 even better. Has there ever before been such strength in depth in world chess?

There are many ways to frame a poll question to debate playing strength versus inflation. One option was “How many players will be rated over 2800 three years from now?” But I will go with another Jacob suggestion: “How does a 2500 player now compare with a 2500 player 20 years ago?” Stronger or weaker or about the same?

I don’t believe in inflation. Our knowledge about chess and about teaching chess has advanced a lot and players nowadays also have far more information available. So it’s not surprising that the top players nowadays play better than the top players of a few decades ago. They *should* have higher ratings.

Conversely, at amateur level, those advances hardly matter, so a 2000 player now plays more or less the same as a 2000 player at any moment since ratings existed. They may play different opening lines, but after move 10 they’re out of book and little has changed.

Kenneth Regan and Guy McC. Haworth did research on this, by running computer analysis on games (excluding the opening) by players of various rating ranges (paper called “Intrinsic Chess Ratings”). They found that ratings correlated with errors found by the engine, and found “Moreover, the final s_fit values obtained are nearly the same for the corresponding entries of all three time periods. Since a lower s indicates higher skill, we conclude that there has been little or no ‘inflation’ in ratings over time—if anything there has been deflation.”

@Remco G

Without taking an opinion, I should say that this sort of analysis has a fallacy built straight into it. The computers change our way of thinking and voilà, we think more like them than we did before they were around 🙂

Today’s 2500s: I’d say “at least equal strength, perhaps stronger”. They have much more opposition at lower levels. Coming back from summer tournaments in Greece/Crete, there were a lot of young, super-strong 2200s, computer-bred, very hard to beat. If this is the same everywhere, you need to be stronger than ever. In a sense, I’d say that our computer era is good for humans: fresh ideas, okay positions previously assessed as unplayable, pundit-free evals etc. More importantly, computers help mainstream players improve their tactical resilience (= not collapsing in worse positions), which previously was the mark of chess pros. Stronger humans now.

I think games from different eras are hard to compare clearly. Certainly back in the eighties a 2600 rating was a rarity and 2700 unheard of, so a 2500 player was pretty high on the ranking list at the time; on that measure they are certainly ahead. Putting their games through a blundercheck against those of today’s 2500 players would, I’m sure, favour today’s players. But they played according to who they faced across the board, and there seemed to be more speculative sacrificial attacks because they knew these might succeed, so it was an educated gamble. I think defensive skills have improved over the years, so I’m not sure Tal would be so successful with his sac strategy nowadays. It worked perfectly back then, and I’m sure he would alter his style to fit the change of circumstances, so blunderchecking his moves misses the point. I still voted them as stronger now than before nevertheless.

@Jacob Aagaard

Their analysis seems to indicate that the 2500s of today play the same percentage of computer moves as the 2500s of the past, debunking that “fallacy”.

Your fallacy is just another way of saying we learned from computers and play better now.

Is there any objective argument to think there is rating inflation? I don’t think I have ever seen one.

@Raul

Did they take into consideration that we have much less time to make our moves now? Did they exclude the endgame, as it went from adjournment to rapid-play finish?

@Jacob: I don’t recall that they do, that’d be an argument for rating deflation.

@Remco G

I think it is obvious that there is inflation at the top. Also, it is obvious that the players are better than they used to be, simply because the training methods have improved as much as they have. Put a top Soviet player anno 1960 in a technically challenging endgame against Tomashevsky and he would have no chance.

So why do you think there is inflation at the top? If a top Soviet player anno 1960 would have no chance against Tomashevsky, then Tomashevsky should have a substantially higher rating than that top Soviet player, and he does.

I have never seen any evidence for inflation whatsoever.

To me it seems to be a myth perpetuated by people who mix up inflation and increasing depth at the top and by people who lionise the players of the past.

Lionising players of the past is understandable, but it would be very weird if players today wouldn’t be stronger, what with engines, databases, servers and much more common top tournaments.

The increasing depth at the top might be caused by computers allowing more people to create a top opening repertoire, but I’m not sure this process is still ongoing. I’ve been waiting for a rating list with more than fifty 2700 players for quite a while now, but it doesn’t seem to be happening.

Yes, I just looked up an old thread on chesspub; in September 2010 there were 40 2700+ players, now there are 41.

The following ChessBase article is from 2009, but Jeff Sonas was claiming there was inflation. It’s a long article and I have no idea how convincing it is.

https://en.chessbase.com/post/rating-inflation-its-causes-and-poible-cures

100% agreed with Phille. The top people are better today than the top people from 50 years ago in almost every competitive activity, why not chess? Especially given all the concrete reasons (some of which Phille mentioned) that players today could plausibly be better.

Sonas claims that the relatively flat curve of top ratings between 1975 and 1985 shows that people aren’t really getting stronger, but it would argue just as well against any other sort of mechanism for rating increase. A much simpler explanation is just that the general tendency of some number to go up doesn’t mean that it will go up every year, or even every period of ten years. You can see similar plateaus in the history of other competitive activities as well.

Just to be clear, I am not claiming that today’s players are inherently smarter or more talented than players of the past. Their actual moves on the board are stronger than those of the past because they have advantages like engines and databases and decades of opening theory. If you gave Jesse Owens modern running shoes, he’d run faster. That doesn’t mean that modern sprinters’ times are inflated (or deflated, I guess!).

The article from ChessBase (see John’s link) includes an explanation which I have heard before and which I believe is correct. Mathematically the Elo equation does not create inflation; any rating increase reflects playing strength. However, there is a systematic threshold problem when determining a player’s first rating, the initial rating… The following is from the article, right towards the end, and explains it well:

“Now let’s think about how it was back when the rating floor was 2200. Consider a hypothetical group of active players, all of whom have a performance rating of 2000 across all their games. Some of those players will certainly outperform their true 2000-strength for a short time, and others will underperform. Only those players from our group that outperform their true strength will make it onto the rating list, whereas the players who underperform will not be anywhere on the list. This means the players who show up on the rating list just above the rating floor, are (as a group) significantly overrated, just waiting to donate rating points to the rest of the pool. Even worse, while these overrated players keep temporary possession of their 2200+ ratings, other players may also receive inflated initial ratings as well, based partially on games against the overrated players. Over time, the overrated players will do worse than their ratings suggest, and their excess rating points will ultimately be distributed throughout the entire rating pool.”
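The selection effect described in that quote can be sketched with a small simulation. This is only an illustration under stated assumptions: the 2000 true strength and 2200 floor come from the quote, while the normal distribution, the spread `NOISE_SD` and the name `simulate_entries` are made up here and are not taken from Sonas's article.

```python
import random
import statistics

TRUE_STRENGTH = 2000   # every player's real strength, as in the quoted example
RATING_FLOOR = 2200    # minimum rating that gets published, as in the quote
NOISE_SD = 120         # ASSUMPTION: spread of a short-run performance rating

def simulate_entries(n_players=100_000, seed=1):
    """Return the first published ratings of the players who clear the floor."""
    rng = random.Random(seed)
    # ASSUMPTION: short-run performances scatter normally around true strength.
    performances = (rng.gauss(TRUE_STRENGTH, NOISE_SD) for _ in range(n_players))
    # Only the lucky overperformers appear on the list at all.
    return [p for p in performances if p >= RATING_FLOOR]

listed = simulate_entries()
excess = statistics.mean(listed) - TRUE_STRENGTH
print(f"{len(listed)} players listed, overrated by {excess:.0f} points on average")
```

Every listed player is overrated by at least 200 points by construction, which is the "waiting to donate rating points" situation the quote describes. Whether those points stay in the pool afterwards is the part the discussion below disputes.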

Didn’t Keith Arkell say he would expect to beat Capablanca? Fischer in his final interview said some kid of 14 could get an opening advantage vs Capablanca and might even be able to outplay him from there.

Admittedly Capa was before Elo, but surely he would be higher than Arkell/ kid of 14.

Well, for me the problems with the Sonas article already start with the definition of inflation. To me inflation means that a player of constant strength would over time gain rating points. It doesn’t have much to do with the rating of the number X in the world rankings. Because that number depends on many very fuzzy factors.

For example I wouldn’t be surprised at all if the increase of his inflation proxy starting in the mid-eighties is actually a result of the Fischer boom: lots of kids who got fixed on chess in ’72 becoming strong GMs during the 80s (just look at the English chess scene). Or how did the fall of the iron curtain influence his inflation number? I’d say it should’ve kicked it up a notch even without any inflation. He doesn’t believe that an increase in the number of rated players would result in more highly rated players (and he is probably right), but an increase in the number of players (i.e. of talents) should result in more highly rated players and he doesn’t look at that factor.

How many talents are funnelled into chess, how do the playing conditions of top players change, how do training possibilities of top players change, how does the knowledge distribution change? All these things confound his analysis and compared to the intrinsic performance ratings it’s just not very convincing.

Sonas uses some very strange mathematics in his rating calculations.

For example he calculates for some players ratings higher than their best performance ratings, and rating increases without playing in that period.

Some people seem to believe that the number that comes out of the Elo rating formula is some measure of how good the moves on the board are. It isn’t and there is no mechanism by which it possibly could be. Ratings only measure results of players against each other. No matter how much better modern players’ moves are, ratings should not be higher than they were historically. The fact that they are, means that there is rating inflation. The moves are doubtless also getting better over the same time period, but one does not measure the other and it would be a remarkable coincidence if they were increasing at the same rate (however you would measure that). So John’s question should not be “inflation or golden era”, but “is the inflation rate higher or lower than the increase in goldenness” (though I am sure a professional writer would not use such an ugly phrase).

One interesting possibility is that both rating inflation and improvement can be largely accounted for by the increase in the amount of serious chess being played. This would suggest that the two measures should at least be positively correlated, though how strong the correlation would be is debatable.

@Steve: if we assume that the great masses of club players are still playing basically the same game in basically the same way as ever, but strong players (and especially the top) are able to make use of advances in knowledge about chess and chess training, then the gap in skill between the masses and the top is larger than it used to be, so ratings at the top should be higher.

@Steve

Of course Elo does not directly measure move quality (if it did, no one would have this argument!); it just predicts the expected results of games between players, who of course are always playing each other in the same year as each other. The question of rating inflation is whether ratings from different periods are roughly comparable or not. Of course, there is no a priori reason that they should be, as there are many reasons that ratings could drift. But all the studies that have been done, as far as I can tell, indicate that, say, a 2600 player from 2016 and a 2600 player from 1976 would have fairly equal chances in a match, even though the 1976 player would have been about a world #5 then and the 2016 player would be about a world #100 now.

(I meant 2650, not 2600, in that example.)

@Bulkington

The problem with this argument is that it only makes sense for a very small subset of players, namely for those that have a strength of maybe 2100, overperform to get a 2200 rating, shed their additional points to the rating pool and then get run over by a truck.

Luckily that’s not what usually happens.

Instead players who get a lucky first rating of 2200 tend to improve further. And once they have reached an actual strength of 2200 they have sucked all the donated rating points back into their own rating. And if they keep improving they’ll actually suck more and more points OUT of the rest of the rating pool. And then they get run over by a truck …

So the real question isn’t whether players who enter the pool are overrated. The real question is whether players who leave the rating pool take points with them or leave points behind.

@Paul H: it would be interesting to know Keith’s arguments.

The ultimate experiment could be to freeze a 2500 GM and defrost him in 20 years.

Anyone interested?

The Jeff Sonas article makes zero sense to me.

He makes up his own weird definition of rating inflation, namely “the rating of the #X player on the rating list has increased over time” and then goes: See, this is exactly what happened, there is rating inflation.

This is of course also exactly what you would see if the level of chess increases over time.

@Remco G

And the ratings lower down should be lower, and there should be a more positively skewed distribution. Is either of these true? I have never seen any evidence to suggest so.

My personal experience is that there has been a tremendous improvement at the lower levels since the seventies. Everything in chess (knowledge of openings, middlegame and endings) has gone up and the overall performances are now sometimes superb.

@Steve

The problem is that the rating floor has been changed several times and even without a change in the rating floor things like a varying rate of new players mess up the distribution of lower and higher ratings. If the rating pool had a stable number of players and a stable distribution of ratings we wouldn’t have this discussion. In that case we could just look at how the mean changed over time.

@Phille

Except for the part with the truck I tend to disagree. Let’s say we have a closed group of rated players playing against each other; then inflation is impossible because the sum of all rating points stays the same all the time. This includes scenarios where members dramatically improve their playing strength and rating, because others will correspondingly lose rating points. The sum always stays the same, therefore no inflation.
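The zero-sum claim can be checked with the standard Elo update. The sketch below assumes both players share the same K-factor (`k=20` is an arbitrary choice here); when players have different K-factors, as can happen under FIDE rules, a single game is no longer exactly zero-sum. The function names are illustrative only.

```python
def expected_score(rating_a, rating_b):
    """Standard Elo expected score for player A against player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))

def update(rating_a, rating_b, score_a, k=20):
    """Return both new ratings after one game; score_a is 1, 0.5 or 0.

    ASSUMPTION: both players use the same K, so B loses exactly what A gains.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta

# An upset: the 2300 beats the 2500. Points move, but the total is unchanged.
new_a, new_b = update(2300, 2500, score_a=1)
print(new_a, new_b, new_a + new_b)
```

Under this assumption the sum of the two ratings is the same before and after the game, which is the sense in which a closed pool cannot inflate.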

Now, for simplicity let’s presume the members are in a steady state, with constant ratings, and I enter the group overrated. Then the difference between my true rating and the overrated number will be distributed among the group members. As a consequence their rating rises without a corresponding improvement of their playing strength. That is inflation.

True, I might improve my playing strength and get my lost points back, but this does not take back the effect of the wrongly introduced number of rating points. It merely impacts the distribution of the inflated overall sum of rating points.

It would not be a problem if there were no threshold to overcome for getting the FIDE rating (currently it is 1600?). Then over-rated and under-rated candidates would average out. But the current system creates more over-rated candidates which creates inflation.

Chess went through a boom-phase over the last decades with lots of initially over-rated FIDE ratings so I believe the effect is serious.

@Steve: the number of club players is so huge compared to the number of top players, that you don’t see the average ratings of club players go down.

@Pinpon

“Perhaps the most strident advocate of the rising standards theory is GM Keith Arkell. He’s quite militant in his views and is of the opinion that players like Capablanca and Alekhine were barely 2400 strength. He thinks if they came back now, they’d struggle to beat IMs. He thinks the great Aron Nimzowitsch would barely scrape a 2200 rating. Controversial views, no doubt, but thought-provoking.”

– GM Danny Gormally

Source: Chess Monthly Feb. 2012

I think to be correct you really need to consider what, e.g., Capablanca’s results would be today with six months of modern training methods. Obviously someone who hasn’t studied in twenty years wouldn’t have an up-to-date opening repertoire.

Do you consider rating as a photo or a film?

Probably a film, if you are not a youngster, as it sums up all your previous results.

If that is the case, one should consider rating+age comparisons, i.e. a 50 y.o. 2500 GM in 1996 vs a 50 y.o. GM in 2016.

As a rather unscientific but (I believe) valid approach, I look at the moves and ratings of the top ten players today versus the top ten of 30-40 years ago. Today’s top ten are (generally) far higher rated, so the question becomes do they play better moves? And the answer seems to clearly be yes. While the top ten of 30 years ago were capable of some remarkably impressive games (e.g., Fischer vs. D. Byrne) the overall quality of their moves was not comparable to the top ten today. They played some incredible moves, and consistently played reasonably good moves, with an occasional sub-par move.

Today’s top ten occasionally play some incredible moves, and consistently play very good moves. They play like computers, with almost never any mistakes. Maybe they don’t always play the absolute best move, but they do play the absolute best with remarkable frequency and almost never play a move that is much less than the best.

If the players from 30 years ago were playing today they would simply get killed on consistency. It’s no longer enough to play mostly good moves – they all have to be great!

@Paul H: Thanks.

Seems a bit harsh to me. With this theory, Philidor would be 2100 at best, but how many 2100 players could solve the R+B vs R Philidor position from scratch? Probably none.

On the contrary, I think it is very important to explicitly not consider the effect of modern training methods on historical players (when comparing ratings). We are comparing how well people actually played, not how smart they were.

If we defined rating to mean how well someone would play if they had modern technology, then of course contemporary ratings would be inflated (or historical ratings would be deflated), and no one would be arguing here 🙂

No one thinks less of Jesse Owens for not having had modern running shoes, but his results remain what they were.

I have been playing chess since 1983 in the 2200 – 2300 FIDE level and am starting to play more actively again against 2200 – 2500 level competition. The players today are simply better than 30 – 40 years ago. To maintain my rating, I am having to improve, a lot!

Having said that, for Arkell to say that Capa would only be 2400 is ridiculous. Emanuel Lasker competed with Botvinnik, who competed with Fischer and Smyslov, and Smyslov competed with Kasparov at age 60+. The elite were good and would be elite in any era.

What is different is that chess is more popular today than even 10 years ago. Many kids have strong trainers and full parental support. So the pool of players is larger and the pool of players with high-level training is much larger. Hence, at all levels, the players are better. The system is a bell-shaped curve, so it is harder to become 1800 – 1900 because more are trying. Players without the training play like they used to. But the gap is bigger between these players and the masses. Because there are more players, a few (Carlsen, Caruana, Kramnik, etc.) have set new or near all-time rating highs. This is not inflation, but simply a reflection of more players and their true strength.

It’s a game of information.

We have more information today.

It’s more a game of concentration and resilience. But that’s also better today.

@Doug Eckert

Arkell’s claim is given some credence by a lengthy essay in John Nunn’s Chess Puzzle Book. It compares 2 top level tournaments: 1911 Karlsbad and 1993 Biel Interzonal. Nunn was shocked at the volume of blunders in the 1911 tournament and concludes that the implied average player rating for the tournament was only around 2130. John Watson includes extended excerpts from that essay in a review of it at http://theweekinchess.com/john-watson-reviews/historical-and-biographical-works-installment-3

@AlexRelyea: but take almost any player with a rating today, give him six months of full time training, and he’d be much stronger too. Unfair to give only Capablanca that.

@Bulkington

You use a different definition of inflation.

To me inflation is when players of constant strength get higher ratings over time.

Unfortunately your definition of inflation is pretty impractical because the global player pool changes constantly in a variety of ways.

And consider this case: You have a subpool of players in some far off village who only play each other for a decade. During this time they improve considerably. According to your definition there would be no deflation in that subpool (the sum stays the same) and there would be no de/inflation in the global pool.

According to my definition there would be deflation in the subpool and this would become obvious once they play an international tournament, because they would massively overperform.

Now tell me, which definition is actually useful to compare the strength of players from different subpools (say, top players in the eighties and top players today) by their rating?

And nobody thinks the winner of the average high school mile race is better than Roger Bannister at his peak. I stand by what I wrote.

@Alex Relyea

Fair enough. It’s useful to have clarified that we’re using different definitions, since it allows us to realize early on that there’s no point arguing. I do agree (and I think anyone would) that using your definitions there has obviously been rating inflation.

Technically, if everybody else is improving but you keep your strength constant, then you will lose rating points. But that is neither deflation nor inflation. As Steve, I guess, already mentioned, the rating does not measure absolute strength but strength relative to other participants.

The far-off village example is a nice one, but this does not create deflation; it creates under-rated players. The system will always comprise underrated and overrated players, but let’s not confuse this with inflation and deflation. Improvement by training or decay by dementia will not create inflation or deflation. It is not possible to create inflation from inside the system because it is a zero-sum game.

The problem occurs when you enter the system being over-rated. Example: ten players with 2500 and equal strength, steady state. Now we add another ten players with the same strength to the system, but instead of an initial rating of 2500 they get an initial rating of 5000. Now you let them play; all have equal strength. After a while they all will end up with a rating of 3750. That is inflation, also according to your definition.
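The arithmetic of this example can be reproduced with a short simulation. It is a sketch under simplifying assumptions that are not in the comment: every player uses the same K-factor (`K = 20`), "equal strength" is modelled by scoring every game as a draw, and the players meet in repeated round robins.

```python
from itertools import combinations

K = 20  # ASSUMPTION: one K-factor for everybody, so every game is zero-sum

def expected_score(rating_a, rating_b):
    """Standard Elo expected score for player A against player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))

def simulate(rounds=200):
    # Ten established players at 2500, ten newcomers wrongly entered at 5000.
    ratings = [2500.0] * 10 + [5000.0] * 10
    for _ in range(rounds):
        for a, b in combinations(range(len(ratings)), 2):
            # ASSUMPTION: equal strength is modelled as every game being drawn.
            delta = K * (0.5 - expected_score(ratings[a], ratings[b]))
            ratings[a] += delta
            ratings[b] -= delta
    return ratings

final = simulate()
print(min(final), max(final), sum(final) / len(final))
```

The total of 75,000 points never changes, so twenty equally strong players can only settle at the pool average of 75,000 / 20 = 3750. The original ten end up 1250 points higher without playing any better.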


David, I read the article previously and now again regarding the 1911 tournament. I enjoyed it and don’t disagree with the conclusions about the overall field in 1911 and the blunders. But there are a couple of points. First, when you look at the fields in those early tournaments, there were a couple of elite players and then the rest were more or less normal masters like myself. However, I will stand by my comment that the greats of the past could compete today, although they might not become world champion. There are enough games between the generations to support that conclusion, of which I tried to give a few examples of the many that exist. Finally, this demonstrates how much the talent pool has grown. With a larger talent pool, there will arise players who are simply better. The “simply better” suggests ratings are not inflated, but accurately reflect relative strength. There was another study estimating the Elo ratings of past players. I can’t find it right now. But I thought it had many of the prior world champions, Lasker, Alekhine, Euwe, Botvinnik etc., in the high 2600 rating range. That puts them a shade below elite today, but makes them highly competitive GMs. That does not seem too far off. Armed with greater knowledge, 1 or 2 of them would likely have been 2750+. Obviously we will never know for sure…

Hi,

FIDE and their calculation of ratings have made it more absurd than ever. I know several “FIDE masters” of today who got the title playing in kids’ tournaments, quickly got to 2300, and after they started to play opens their ratings diminished to 2000. FIDE also introduced the CM title because they are interested in getting money from people playing chess. Quantity over quality.

Of course people in general got higher ratings, because there are a lot of tournaments and the FIDE K is 40. It would be better to get a FIDE rating after you have played 100 Elo-rated games than to get an Elo according to your performance. In that case the average world Elo wouldn’t be so high as in the 80s.

Elite players play round robins and they hardly lose Elo points.

I believe we are in a golden age and that people are far stronger today than before.

That is hardly surprising – the greats of today have stood on the shoulders of giants.

In virtually all disciplines, people get better as knowledge accumulates. With computers one can access all the games of the greats, can learn pawn structures, plans, tactics and endgames so much faster than before. As people train with computers, their defensive skills change and they learn to bend the rules a lot more, as computers have shown time and time again (and John Watson).

I’m not sure it is helpful comparing Capablanca with Carlsen any more than it is comparing Mozart with Bach before him or Mahler after him. They are products of their time and era and their greatness must likewise be seen in context. Just playing over games from the 70s and even 80s and playing over modern games you get the sense of how much tougher chess is today.

We should celebrate both the great players of today (of which we have many) and the great players of the past for their achievements and what we have shown to us and how they have inspired us.

@Bulkington

Well, as I said, you are using a different definition.

Usually these discussions about inflation revolve around questions like “Is Giri really stronger than Fischer?” and of course your definition of inflation has nothing to do with this question, while mine does.

But even in the context of your definition, the explanation for the existence of inflation doesn’t make any sense. If a player enters your hypothetical fixed pool of players and then exits this pool again, it is irrelevant whether he was overrated when he entered. The only thing that matters is whether his exit rating is higher than his entry rating or not. The pool will be the same again; if the player’s rating has risen during his time the sum will be lower, if it has fallen the sum will be higher. And of course he doesn’t really have to exit the pool for this effect; that’s just to get back to your fixed pool and its sum.

Reading your example it seems to me that you completely ignore the fact that most players’ strength and rating change over time. New players don’t enter in the middle, they enter at the bottom. That’s extremely relevant because it means that they have been improving until they reached that lower limit at least for one performance. This implies that they will often keep improving, which is exactly my point. Once their strength reaches that lower limit the effect of being overrated at entry on the rest of the pool is completely gone.

@Bulkington

“Improvement by training or decay by dementia will not create inflation or deflation.”

So, if in your example the new players actually have the strength 5000 when they enter the pool, and then suffer a sudden case of dementia and fall to the strength 2500, then it’s not inflation? You must confess that’s absurd. It’s the exact same scenario.

To be consistent in your definition you’d have to find out for every falling rating whether it was caused by being overrated or by getting weaker. Because for the numbers it doesn’t make a difference. The only thing that makes a difference is whether the rating is rising or falling.

@Phille

If an over-rated player enters the group and starts playing, then his rating adapts and the inserted dummy points are distributed and create inflation, no matter what rating he leaves the group with.

Exits could also create inflation or deflation as they change the sum of points in the pool. But exits are random, so the over-rated and under-rated exits average out. That is not the case for the entries, where the current system creates more over-rated entries than under-rated entries because of the threshold.

Regarding the example, maybe I was unclear: the added players have a steady-state strength corresponding to 2500 points but upon entering the system they are over-rated having a rating of 5000 points. In other words, 25000 dummy points are added to the system. One can compare this to a country printing more money. The result is inflation.

Anyway, was fun to discuss the details even if we are not on the same page here.

So Giri vs Fischer. I remember the movie “Back to the Future” when in 1955 McFly, coming from 1985, shows this crazy guitar performance on stage. Nobody understood the stuff but Chuck Berry was listening and felt inspired. Therefore I think Mr. Fischer would feel inspired, to say the least.